We need to talk about what’s happening on X, the platform formerly (mostly still) known as Twitter.

Senators urging Apple and Google to remove X and Grok from app stores — 3 U.S. senators called on Apple and Google to remove the apps until policy violations are addressed, saying X profits from user‑generated abuse.

– NBC New York News

Last week, UK Prime Minister Keir Starmer called out Elon Musk’s platform for allowing its built-in AI tool, Grok, to generate explicit deepfake images. Some of those images included minors. That’s not a tabloid exaggeration. It’s a real issue, and governments are scrambling to respond.

“UK Prime Minister Keir Starmer has strongly condemned the use of X’s Grok AI chatbot to generate sexualized deepfakes, including imagery of adults and minors… described the content as ‘disgusting’ and vowed the UK government would take action.”

Starmer is pushing for consequences. His office is in talks with leaders in Canada and Australia to coordinate a response. There’s even talk of blocking X entirely if it continues to allow this kind of content. I wouldn’t oppose that.

As a father, this goes beyond frustration. This feels like a breaking point.

The Internet Isn’t Run by Responsible People

I don’t trust Elon Musk. I don’t like how he runs his companies, how he talks about free speech, or how casually he allows harm under the guise of “innovation.” He didn’t build Twitter. He bought it. Then he fired moderation teams, boosted his own content, and welcomed back accounts that were banned for a reason.

Now the platform is generating AI deepfakes. Some are violent. Some are abusive. Some target children. This isn’t progress. It’s reckless.

And kids are on these platforms every day.

– GB News

“Downing Street has held talks with Canada and Australia over a potential ban on Elon Musk’s social media platform, X… concerns over its AI tool, Grok, being used to generate explicit images of women and children.”

I don’t think social media, in its current form, is safe for teens. Their brains are still developing. The content they see, the validation loops, the pressure to perform — it all adds up. We’re already seeing the consequences. More anxiety. Less sleep. Endless comparison. It’s not just noise. It’s shaping who they become.

What Canada Should Actually Do

Let’s stop hoping tech giants will fix themselves. They won’t. We need new rules and better tools. Here’s where we could start:

- Enforce age limits on all social media platforms. Not just a checkbox that says “I’m 13.”

- Make algorithm-free feeds mandatory for anyone under 18.

- Ban or block platforms that allow AI-generated explicit content without oversight.

- Invest in alternatives built by people who live under Canadian law and actually care about public safety.

A Different Option Is Starting to Take Shape

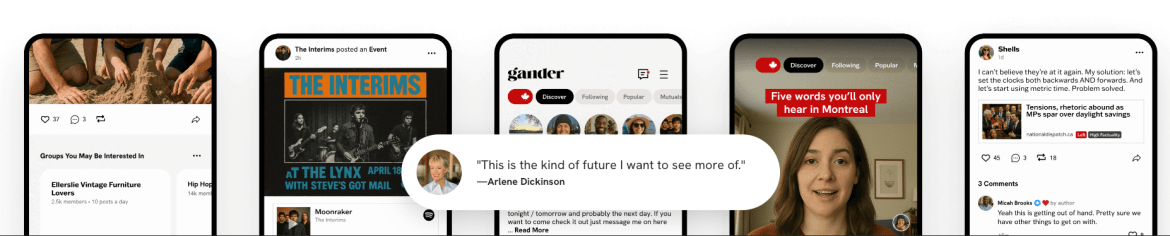

One option is called Gander Social. It’s Canadian. It’s privacy-focused. It doesn’t make money by keeping you angry or addicted.

The platform is still in early access, but already it feels different. You choose what you see. You’re not being fed outrage bait. And because it’s being built here, it’s shaped by the same values most of us try to live by.

It might not be the answer to everything, but it’s a sign that better is possible.

This Stuff Isn’t Neutral

Social platforms aren’t just places to chat. They’re where people learn, argue, and form identities. When those platforms are broken or dangerous, they don’t just distort conversation. They distort culture.

If the people running them don’t care about safety, it’s up to the rest of us to build something better.

And if you’re a parent:

Would you let your kid use any of the major platforms right now?

What would it take to feel safe about that?

SocialDad’s Guide to Key Safety Features & Settings For Social Media (Platform-Specific & General)

- Private Accounts: A default setting on some platforms (such as TikTok for teens) that controls who can see posts and send messages.

- Content Filters: Restrict access to inappropriate content, often available through platform settings or third-party apps.

- Time Limits: Built-in tools (like TikTok’s 60-minute limit) or device-level settings to manage screen time.

- Direct Message (DM) Controls: Limit who can message your child (e.g., only friends, or disable entirely).

- Location Services: Turn off geotagging and location sharing in device settings and apps.

- Blocking & Reporting: Tools to stop unwanted contact and flag harmful content or users.

- Two-Factor Authentication (2FA): Adds an extra layer of security to accounts.

Essential Skills & Habits for Teens

- Protect Personal Info: Don’t share name, age, school, address, or phone number.

- Think Before Posting: Assume anything posted can be permanent (“Grandma Rule”).

- Stranger Danger: Only accept friend requests from real-life acquaintances.

- Beware of Links/Scams: Don’t click unknown links or share info for “upgrades”.

- Screenshot & Tell: Capture concerning content and show a trusted adult.

Parental Tools & Strategies

- Parental Controls: Use device or app-level controls (e.g., Apple Screen Time, Google Family Link, Kaspersky Safe Kids).

- Communicate: Have ongoing conversations about online experiences.

- Stay Informed: Learn about the apps your kids use.

- Set Ground Rules: Establish family guidelines for device-free times and spaces.

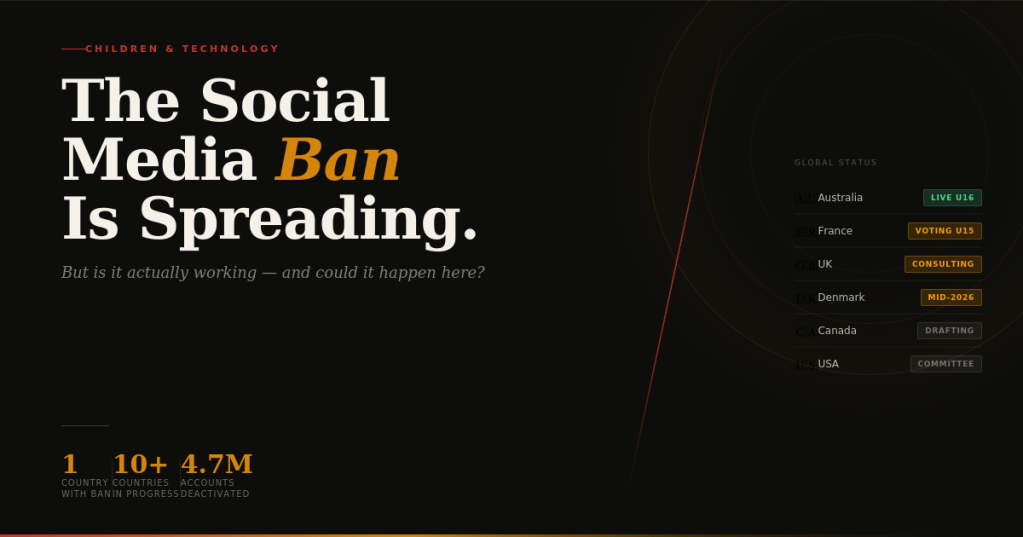

And a bit of background: as of late 2025/early 2026, Australia has implemented the first major nationwide ban, prohibiting under-16s from having social media accounts. Other nations, including France, Malaysia, Denmark, and Norway, are considering similar bans or strict age verification laws. China uses “minor mode” restrictions, and the US has state-level efforts and proposed federal bills, but no full ban yet.

Countries with Bans/Restrictions (or Implementing Soon)

- Australia: World’s first country with a general ban for under-16s, effective December 2025, targeting platforms such as TikTok, Instagram, and Facebook.

- China: Has a “minor mode” restricting screen time and content for younger users.

- Malaysia: Plans to implement a similar ban for under-16s in 2026.

Countries Considering or Implementing Stronger Measures

- France: Aims to restrict access for under-15s starting late 2026, requiring parental consent.

- Denmark & Norway: Planning or considering bans for under-15s.

- India: High courts suggested legislation similar to Australia’s approach.

- United Kingdom: The Labour government is reviewing options, stating “nothing is off the table”.

United States (State & Federal Efforts)

- State Level: States like Louisiana, Tennessee, and Virginia have passed laws with age verification or time limits for under-16s, though some face legal challenges.

- Federal Level: Bills like the Kids Online Safety Act (KOSA) propose age verification and restrictions, but haven’t passed into law.

Key Trend: Following Australia’s lead, many countries are moving from discussion to action, focusing on age verification and parental consent to protect youth from harmful online content.

GandarSocial.ca – Canadian-only social media

Ganders.ca – Twitter but better, for Canada
